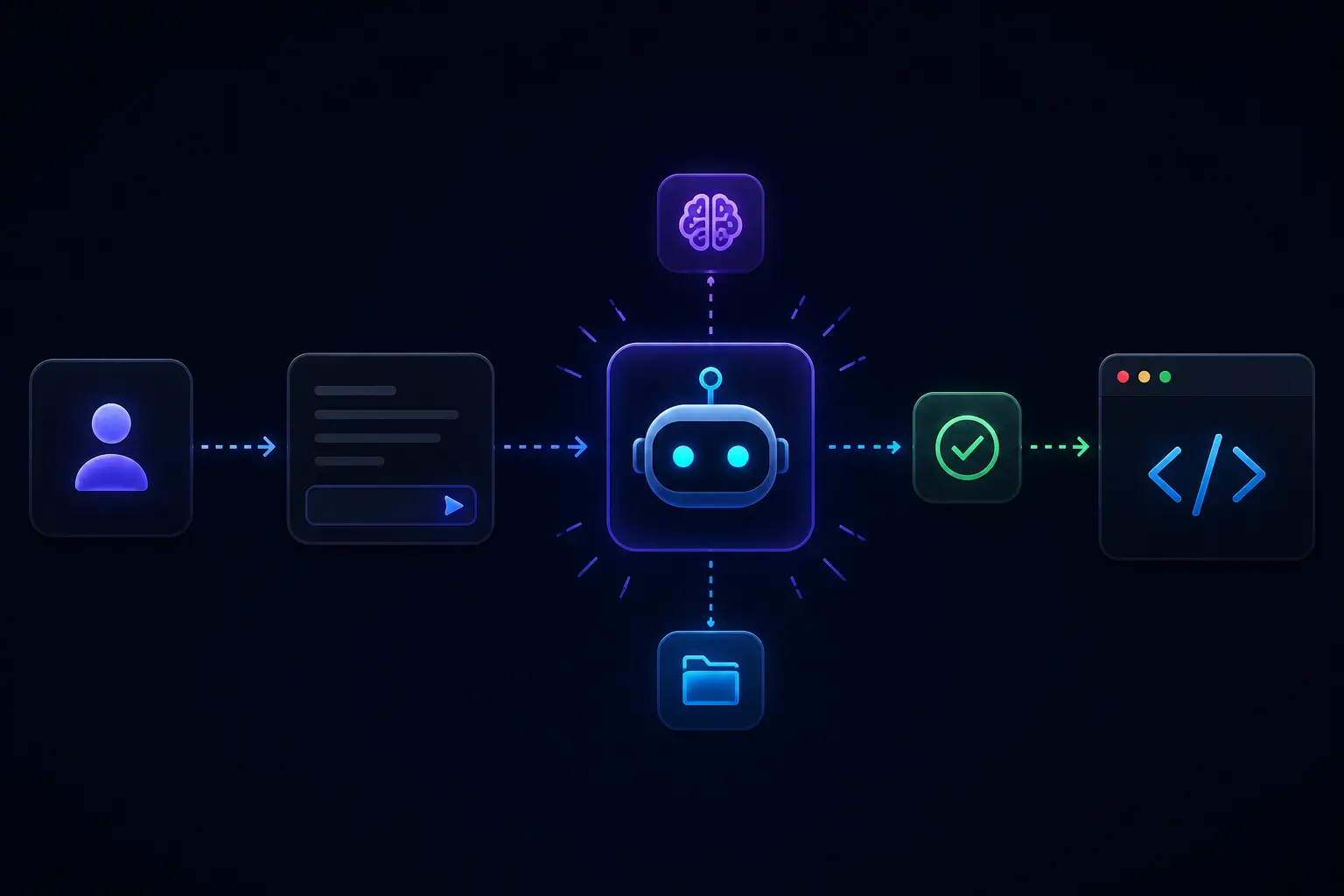

AI Support Bot

RAG-style chat over your own knowledge base, with a full-stack demo and evaluation hooks

This project is a chat UI backed by FastAPI, LangChain, ChromaDB, and an OpenAI-compatible model backend, wired to produce retrieval-augmented answers from the custom knowledge you load. According to the README, responses are scored on dimensions such as relevance, accuracy, coherence, completeness, creativity, tone, and intent alignment. Treat those scores as a QA-oriented lens, not a guarantee of correctness.

When it is useful

It fits when you are prototyping internal help bots, demoing RAG to stakeholders, or learning how chunking, retrieval, and prompting fit together. Run it with Docker Compose as documented, and configure secrets in the backend environment file.
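
To make the chunking, retrieval, and prompting steps concrete, here is a minimal standard-library sketch. It is not the project's code: the function names (`chunk_text`, `retrieve`, `build_prompt`) are hypothetical, and bag-of-words cosine similarity stands in for the embedding search that a vector store such as ChromaDB would perform.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, max_words: int = 40, overlap: int = 10) -> list[str]:
    """Split text into overlapping word windows (a simple chunking strategy)."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last window already reached the end of the text
    return chunks


def _vectorize(text: str) -> Counter:
    # Toy stand-in for an embedding: lowercase token counts.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = _vectorize(query)
    ranked = sorted(chunks, key=lambda c: _cosine(q, _vectorize(c)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Assemble a prompt that grounds the model in the retrieved chunks."""
    joined = "\n---\n".join(context)
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{joined}\n\nQuestion: {query}"
    )
```

In a real deployment the string returned by `build_prompt` would be sent to the model; swapping `_vectorize` and `_cosine` for real embeddings changes retrieval quality but not the overall shape of the flow.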

What you can do

- Load or refresh knowledge sources through the project's own loading flow so answers stay tied to your material.

- Chat through the web front end and inspect how the app surfaces answers.

- Extend storage or logging; the README lists pending work such as a durable store for queries and responses.
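
As one possible starting point for the durable store mentioned in the last item, the sketch below logs each exchange to SQLite using only the standard library. The table name `chat_log`, its columns, and the helper names are assumptions made for illustration; the project does not prescribe this schema.

```python
import sqlite3
from datetime import datetime, timezone


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the log database. Pass a file path for durable storage."""
    conn = sqlite3.connect(path)
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS chat_log (
            id INTEGER PRIMARY KEY AUTOINCREMENT,
            created_at TEXT NOT NULL,
            query TEXT NOT NULL,
            response TEXT NOT NULL
        )
        """
    )
    conn.commit()
    return conn


def log_exchange(conn: sqlite3.Connection, query: str, response: str) -> int:
    """Store one query/response pair and return its row id."""
    cur = conn.execute(
        "INSERT INTO chat_log (created_at, query, response) VALUES (?, ?, ?)",
        (datetime.now(timezone.utc).isoformat(), query, response),
    )
    conn.commit()
    return cur.lastrowid


def recent_exchanges(conn: sqlite3.Connection, limit: int = 10) -> list[tuple[str, str]]:
    """Return the most recent (query, response) pairs, newest first."""
    return conn.execute(
        "SELECT query, response FROM chat_log ORDER BY id DESC LIMIT ?",
        (limit,),
    ).fetchall()
```

Calling `log_exchange` from the chat endpoint after each answer would be enough to start collecting history. Decide how long rows are retained before pointing `open_store` at a persistent file.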

Limits

- Wrong or harmful answers remain possible; use human escalation for regulated, safety-critical, or high-stakes topics.

- Evaluation scores are heuristic; they do not replace human review or compliance sign-off.

- Cost, retention, and data residency for embeddings and logs are your operational decisions.
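
The first two limits can be combined into a simple routing rule: send an answer to a human whenever the topic is sensitive or the heuristic scores look weak. The sketch below is hypothetical. It assumes each dimension is scored on a 0 to 1 scale, and the thresholds and the `needs_human_review` helper are illustrative choices, not the project's behavior.

```python
from statistics import mean

# Dimension names follow the README's list; the 0-1 scale is an assumption.
DIMENSIONS = (
    "relevance",
    "accuracy",
    "coherence",
    "completeness",
    "creativity",
    "tone",
    "intent_alignment",
)


def needs_human_review(
    scores: dict[str, float],
    sensitive: bool,
    min_mean: float = 0.7,
    min_any: float = 0.4,
) -> bool:
    """Return True when an answer should be escalated to a person."""
    # Sensitive topics always escalate, regardless of scores.
    if sensitive:
        return True
    # Missing dimensions mean the evaluation is incomplete, so escalate.
    if any(d not in scores for d in DIMENSIONS):
        return True
    values = [scores[d] for d in DIMENSIONS]
    # Escalate on a weak average or on any single very low dimension.
    return mean(values) < min_mean or min(values) < min_any
```

A passing result here only means the heuristics raised no flag; it is not a substitute for human review or compliance sign-off.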