insightX

Upload images for automated checks: sensitive-content screening and object detection

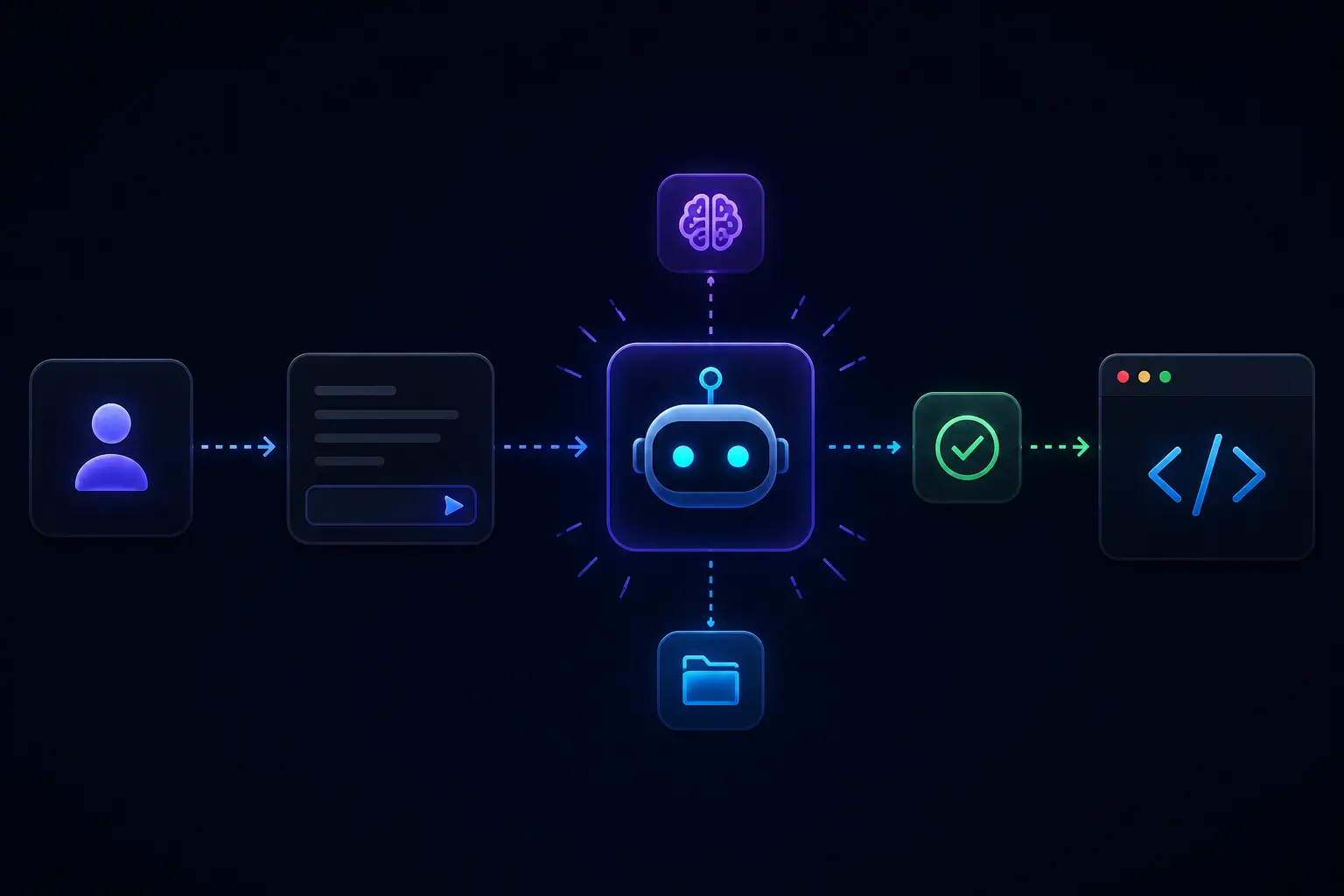

insightX splits the work across a web UI, an API, and background workers that analyze the images you send through it. The repository documents NSFW-oriented screening (via a dedicated model) and object detection with bounding boxes (via YOLOv8), plus storage that can target either local or cloud object storage, depending on configuration.
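To make the API-and-worker split concrete, here is a minimal sketch of the general pattern using only the Python standard library. It assumes an in-process queue and a single worker thread. The names `analyze`, `worker`, and `run_batch` are hypothetical and are not taken from the insightX codebase, which may use a different queueing mechanism entirely.

```python
import queue
import threading


def analyze(image_bytes: bytes) -> dict:
    """Placeholder for model inference (hypothetical); a real worker would run the models here."""
    return {"size_bytes": len(image_bytes)}


def worker(jobs: queue.Queue, results: dict) -> None:
    """Pull jobs until a None sentinel arrives, storing one result per job id."""
    while True:
        job = jobs.get()
        if job is None:
            break
        job_id, image_bytes = job
        results[job_id] = analyze(image_bytes)


def run_batch(images: dict) -> dict:
    """Play the API role: enqueue every image, then wait for the worker to finish."""
    jobs: queue.Queue = queue.Queue()
    results: dict = {}
    thread = threading.Thread(target=worker, args=(jobs, results))
    thread.start()
    for job_id, image_bytes in images.items():
        jobs.put((job_id, image_bytes))
    jobs.put(None)  # sentinel: no more work
    thread.join()
    return results
```

Calling `run_batch({"a": b"123"})` returns one result per submitted id. The point of the split is that the caller only enqueues work and collects results, while the slow analysis step runs elsewhere.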

When it is useful

insightX fits if you are experimenting with moderation pipelines, need a lab environment to see how detections and annotated previews behave, or want a starting point to fork for internal tooling. It is not a certified compliance product on its own.

What you can do

- Submit images through the UI and review results and confidence-style scores as the app presents them.

- Run object detection that can produce annotated visuals (bounding boxes) alongside structured findings.

- Operate the stack with the container-based quick start in the repository; deeper client, API, and worker notes live in the GitHub project for contributors.
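As a rough illustration of what "structured findings" with bounding boxes can look like, the sketch below models one detection and converts a normalized center-format box (the convention used by YOLO label files) into pixel corners suitable for drawing. The `Detection` fields and the `to_pixel_box` helper are hypothetical and do not reflect the actual insightX API schema.

```python
from dataclasses import dataclass, asdict
import json


@dataclass(frozen=True)
class Detection:
    """One detected object; field names are illustrative, not insightX's schema."""
    label: str
    confidence: float  # model score between 0.0 and 1.0
    box: tuple  # (x_min, y_min, x_max, y_max) in pixels


def to_pixel_box(cx: float, cy: float, w: float, h: float,
                 img_w: int, img_h: int) -> tuple:
    """Convert a normalized center-format box to pixel corners, clamped to the image."""
    x_min = round((cx - w / 2) * img_w)
    y_min = round((cy - h / 2) * img_h)
    x_max = round((cx + w / 2) * img_w)
    y_max = round((cy + h / 2) * img_h)
    return (max(0, x_min), max(0, y_min), min(img_w, x_max), min(img_h, y_max))


def to_json(detection: Detection) -> str:
    """Serialize a detection so it can be returned next to the annotated image."""
    return json.dumps(asdict(detection))
```

For example, `Detection("dog", 0.87, to_pixel_box(0.5, 0.5, 0.5, 0.5, 640, 480))` describes a box centered in a 640x480 image and covering half of each dimension.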

Limits

- Automated moderation is imperfect; models can miss harmful content or flag benign images. Use human review anywhere safety, employment, or legal outcomes matter, and follow local law and platform rules.

- Detection quality depends on models, thresholds, and your images; treat outputs as assistive, not ground truth.

- Running at scale is your responsibility: capacity, retention, access control, and cloud spend are your own ops choices. The README describes components, not a managed service SLA.
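One way to treat scores as assistive rather than final is to route mid-range scores to a person instead of deciding automatically. The sketch below assumes a single score between 0 and 1. The function name `route` and both threshold values are hypothetical placeholders, not settings or recommendations from insightX, and would need tuning against your own images.

```python
def route(score: float, flag_at: float = 0.90, review_at: float = 0.50) -> str:
    """Map a model score to an action; thresholds are illustrative placeholders."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score must be within [0, 1], got {score}")
    if not review_at <= flag_at:
        raise ValueError("review_at must not exceed flag_at")
    if score >= flag_at:
        return "flag"  # high score: hold the image, ideally with a reviewer confirming
    if score >= review_at:
        return "human_review"  # uncertain band: a person decides
    return "allow"  # low score: pass through, still subject to spot checks
```

Widening the band between `review_at` and `flag_at` sends more images to reviewers and fewer to automatic outcomes, which is the safer direction when errors are costly.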