LLM Text Evaluation Framework

Score model answers on seven criteria, chart trends, and keep a local history

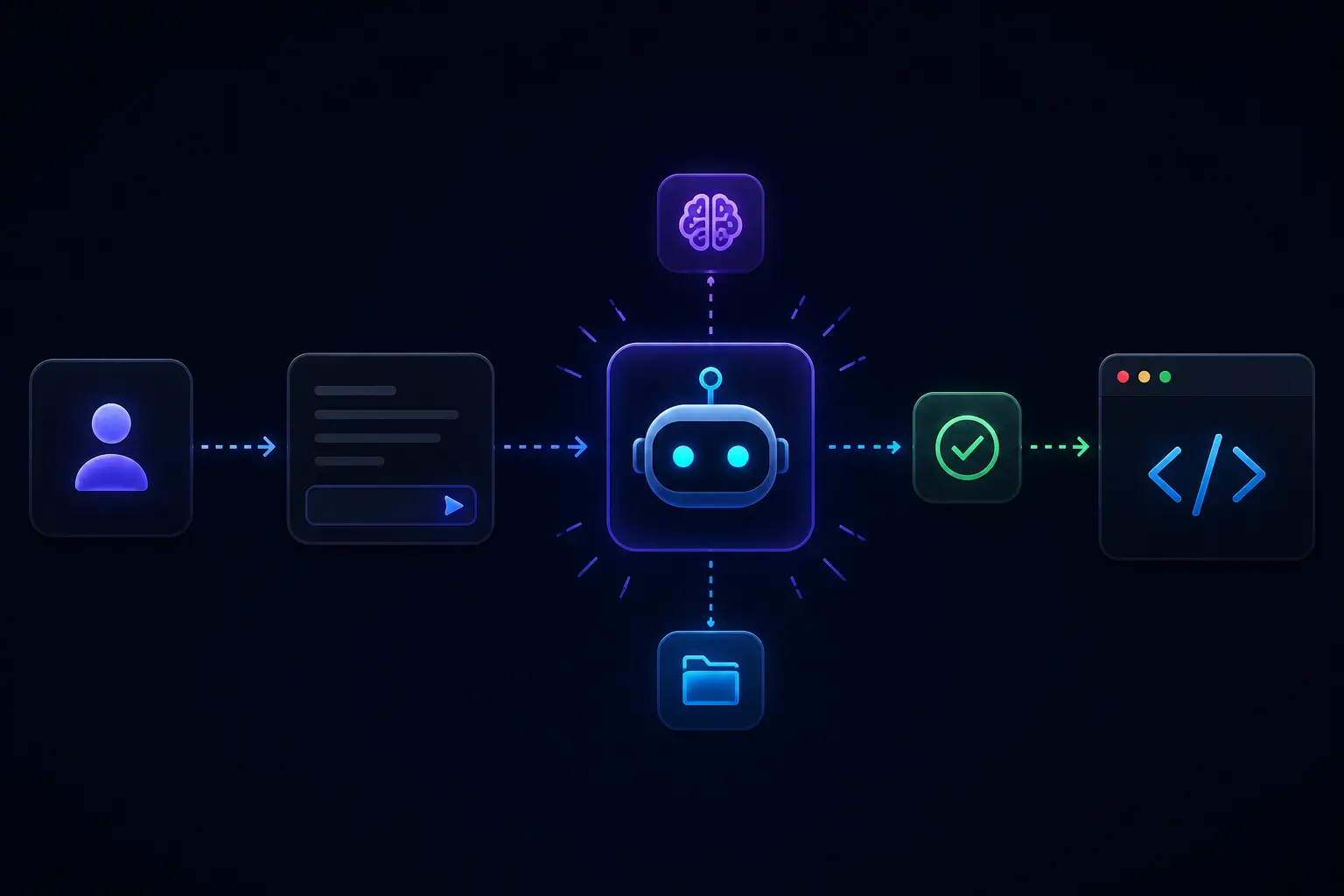

This Streamlit app helps you compare an LLM response to a reference answer using seven weighted dimensions named in the repository (relevance, accuracy, completeness, coherence, creativity, tone, and intent alignment). It stores runs in a SQLite database, shows Plotly visuals, and can be run with Docker or a local Python workflow.
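
To make the idea of "seven weighted dimensions" concrete, here is a minimal sketch of how per-criterion scores could be combined into one overall number. It is an illustration, not the repository's implementation: the function name `weighted_score`, the assumption that each score lies in the range 0 to 1, and the equal weights in the usage lines are all hypothetical.

```python
# Hypothetical sketch: combine per-criterion scores into one weighted mean.
# The criterion names follow the README; everything else is assumed.
CRITERIA = (
    "relevance",
    "accuracy",
    "completeness",
    "coherence",
    "creativity",
    "tone",
    "intent_alignment",
)


def weighted_score(scores, weights):
    """Return the weighted mean of the seven per-criterion scores."""
    missing = [c for c in CRITERIA if c not in scores or c not in weights]
    if missing:
        raise ValueError(f"missing criteria: {missing}")
    total_weight = sum(weights[c] for c in CRITERIA)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    # Dividing by the total lets callers pass weights that do not sum to 1.
    return sum(scores[c] * weights[c] for c in CRITERIA) / total_weight


# Usage with assumed equal weights (the app's real defaults may differ).
equal_weights = {c: 1.0 for c in CRITERIA}
example_scores = {c: 0.5 for c in CRITERIA}
overall = weighted_score(example_scores, equal_weights)
```

Normalizing by the sum of the weights means a change to one weight only shifts the relative importance of the criteria and never pushes the overall score outside the range of the individual scores.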

When it is useful

The app is useful when you are benchmarking prompts or models, teaching structured evaluation, or replacing ad hoc notebooks with a repeatable workflow and simple dashboards. It is a lab and QA helper, not an independent judge of truth.

What you can do

- Enter a prompt, a model output, and an expected answer (terminology follows the app), then inspect per-criterion scores and charts on the home page.

- Open the history view to revisit past evaluations and compare patterns over time.

- Adjust the criterion weights in configuration as documented; how much each criterion matters is a configurable choice, not a universal truth.

- Deploy or develop using the GitHub repository instructions (Docker Compose or uv + Streamlit paths).
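
The history view rests on runs being stored in SQLite. The sketch below shows one way such a store could look, using only Python's standard `sqlite3` and `json` modules. The table name `evaluations`, its columns, and the helper functions are assumptions made for illustration; the app's real schema may be different.

```python
import json
import sqlite3


def open_history(path=":memory:"):
    """Open (or create) a history database with a hypothetical schema."""
    conn = sqlite3.connect(path)
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS evaluations (
            id INTEGER PRIMARY KEY AUTOINCREMENT,
            created_at TEXT DEFAULT CURRENT_TIMESTAMP,
            prompt TEXT NOT NULL,
            scores_json TEXT NOT NULL,
            overall REAL NOT NULL
        )
        """
    )
    return conn


def save_run(conn, prompt, scores, overall):
    """Insert one evaluation and return its row id."""
    cur = conn.execute(
        "INSERT INTO evaluations (prompt, scores_json, overall) VALUES (?, ?, ?)",
        (prompt, json.dumps(scores), overall),
    )
    conn.commit()
    return cur.lastrowid


def recent_runs(conn, limit=10):
    """Return the newest runs first as (id, prompt, scores, overall) tuples."""
    rows = conn.execute(
        "SELECT id, prompt, scores_json, overall FROM evaluations "
        "ORDER BY id DESC LIMIT ?",
        (limit,),
    ).fetchall()
    return [(rid, prompt, json.loads(s), overall) for rid, prompt, s, overall in rows]


# Usage: an in-memory database keeps the example free of side effects.
conn = open_history()
first_id = save_run(conn, "Define recall.", {"relevance": 0.8}, 0.8)
second_id = save_run(conn, "Define precision.", {"relevance": 0.6}, 0.6)
history = recent_runs(conn)
```

Storing the per-criterion scores as a JSON string keeps the sketch short; a real schema might use one column per criterion so that trends can be queried directly in SQL.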

Limits

- Scores come from automated heuristics and models (similarity and text stats); they can disagree with human experts or favor certain writing styles.

- “Accuracy” inside the tool does not verify facts against the world; pair with human review for high-stakes or regulated content.

- Features marked as future in the README (batch exports, API, etc.) are not promised here.
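
The first two limits are easy to see with a small experiment. The sketch below uses `difflib.SequenceMatcher` from Python's standard library as a stand-in for a surface-similarity heuristic; it is not the scoring method the app uses, and the example sentences are invented for illustration. It shows how a factually wrong answer that copies the reference wording can outscore a correct paraphrase.

```python
from difflib import SequenceMatcher


def surface_similarity(a, b):
    """Character-level similarity in [0, 1]; a stand-in heuristic only."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


reference = "Water boils at 100 degrees Celsius at sea level."
# Factually wrong, but almost identical in wording to the reference.
wrong_answer = "Water boils at 10 degrees Celsius at sea level."
# Factually correct, but phrased differently.
paraphrase = "At sea level, the boiling point of water is 100 °C."

wrong_sim = surface_similarity(reference, wrong_answer)
paraphrase_sim = surface_similarity(reference, paraphrase)

# The wrong answer scores higher because only the wording is compared.
print(f"wrong answer: {wrong_sim:.2f}, correct paraphrase: {paraphrase_sim:.2f}")
```

A heuristic of this kind rewards closeness to the reference text, which is why human review remains necessary when factual correctness matters.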